There are several reasons why spurious retransmissions may occur: Expert Insights: Causes and Consequences of Spurious Retransmissions

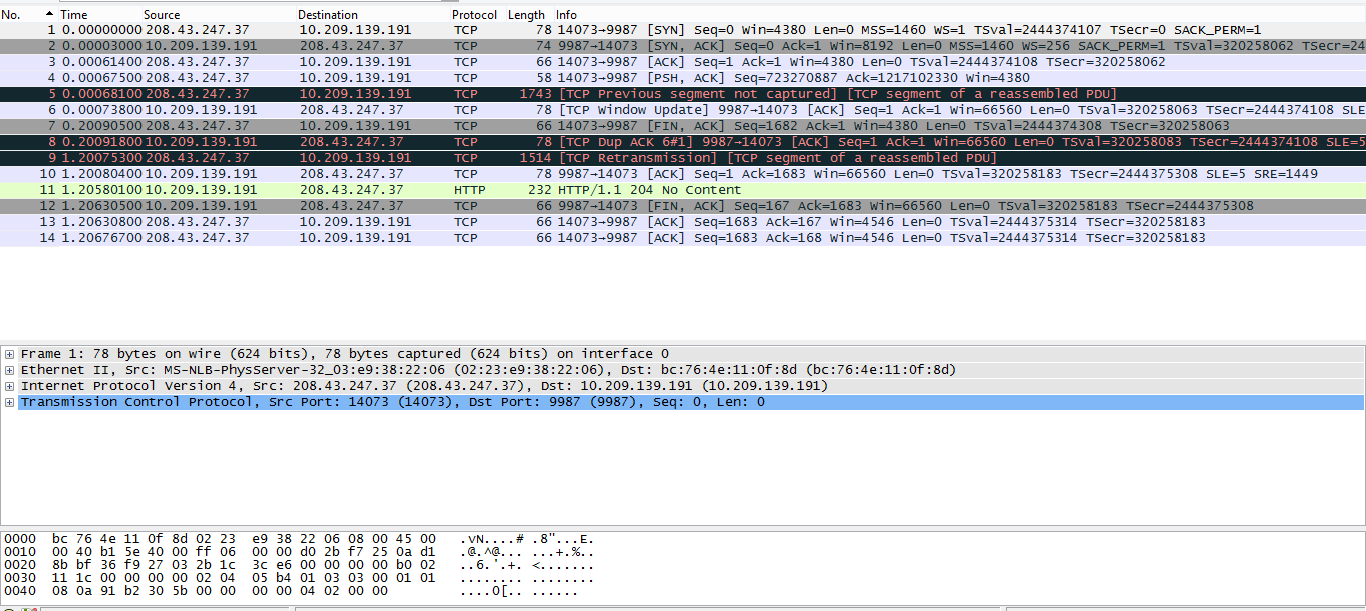

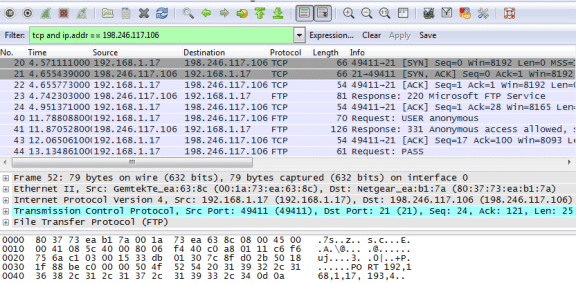

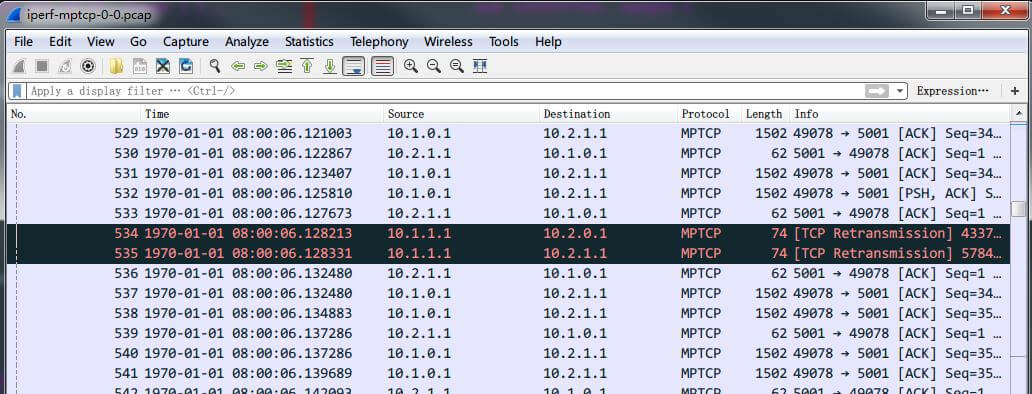

After capturing the traffic and applying the display filter mentioned above, you observe several spurious retransmissions. You notice that the transfer is slower than expected and decide to investigate the issue with Wireshark. Let's consider a real-world example where a file is being transferred between two devices using a TCP connection. This filter highlights packets that have been retransmitted despite having already been acknowledged. To identify them, you can use the Wireshark display filter _retransmission. When analyzing a network capture, you may encounter instances of spurious retransmissions. Real-World Example: Identifying TCP Spurious Retransmissions In this article, we will dive into the causes and consequences of TCP spurious retransmissions, and how to diagnose and troubleshoot them using Wireshark and other packet analysis tools. However, in some cases, packets may be retransmitted even when they have been successfully received, leading to what is known as a "spurious retransmission." This can have a negative impact on network performance and overall user experience. One of its main features is the ability to retransmit lost or unacknowledged packets. TCP (Transmission Control Protocol) is widely used in modern networking to ensure the reliable and orderly delivery of data. You can bypass limits in Squid by tweaking the configuration, but then again it is an unrealistic test.Introduction to TCP Spurious Retransmissions

Some browsers consider the proxy to be a single host but others also have different limits for the proxy (up to 15 concurrent, too). Actually, a user's browser will limit the connections to a single host to a number between 6 and 12 concurrent (depending on the browser and version). 60 new connections per second from several different hosts is more than OK, but it is unrealistic if they are coming from a single host. If you are trying to simulate user load I'm afraid you're not doing it right.

60 new connection per second from the same host is considered harmful by pretty much every network and security administrator out there and any default settings on a firewall or even on a proxy will start silently dropping your traffic. The retransmissions you're seeing are probably due to someone else in the network dropping any other SYN packets from you. I see in your capture's screenshot that it has been 2 seconds since you started the process and you've mentioned getting stuck after ~120 connections, which is a good number of new connections in 2 seconds. As symcbean pointed out, it is more efficient and it may be so evident on your example because you're quickly opening lots of new sockets. Reusing TCP ports is not a bad thing per se.

Also FIN, ACK and RST received for the earlier request.Īttaching tcp dump screenshot for reference:Ĭan you please let me know why it is using same port for the new request and re-transmitting the packet again? Is there a way to avoid port reuse? During this hanged state, I took tcpdump on server and found that it is showing "TCP port numbers reused" and start sending sync packet with same port which was used earlier and showing "TCP Retransmisson". This URL I have blocked on URL filtering engine.Īfter some requests ~120 wget got hanged in between for exactly 1 min. This URL filtering engine which will allow/block URL according to the rule.įrom client I have run a script which does 500 wget to in a loop continuously. Squid then forwards to a URL Filter which has a list of whitelists and blacklists. I have a squid proxy installed on a unix machine which is sending handling http requests coming from an trusted source. Facing issues due to TCP Port Reuse and Retransmission for HTTP traffic.
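The advice to keep per-host concurrency at browser-like levels can be sketched as a small load generator. A thread pool caps how many requests are in flight at once. The default of 6 is the low end of the 6 to 12 range mentioned for browsers. A simple rate limiter also spaces out request starts. `RateLimiter`, `run_requests` and the `fetch` callback are hypothetical names introduced for illustration. The default of 5 new requests per second is an arbitrary assumption, not a value taken from the question or from Squid.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


class RateLimiter:
    """Hypothetical helper: spaces out acquire() calls to at most `rate` per second."""

    def __init__(self, rate: float) -> None:
        if rate <= 0:
            raise ValueError("rate must be positive")
        self.interval = 1.0 / rate
        self.lock = threading.Lock()
        self.next_slot = time.monotonic()

    def acquire(self) -> None:
        # Reserve the next free start slot under the lock, then sleep outside it
        # so other threads can reserve their own slots meanwhile.
        with self.lock:
            now = time.monotonic()
            start = max(now, self.next_slot)
            self.next_slot = start + self.interval
        delay = start - time.monotonic()
        if delay > 0:
            time.sleep(delay)


def run_requests(
    fetch: Callable[[int], object],
    total: int,
    max_concurrency: int = 6,        # assumption: browser-like per-host limit
    max_new_per_second: float = 5.0,  # assumption: arbitrary illustrative rate
) -> List[object]:
    """Call fetch(0) .. fetch(total - 1) with bounded concurrency and start rate.

    Results are returned in request order.
    """
    limiter = RateLimiter(max_new_per_second)

    def task(i: int) -> object:
        limiter.acquire()  # wait for this request's start slot
        return fetch(i)

    # max_workers caps how many requests are in flight at once,
    # similar in spirit to a browser's per-host connection limit.
    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        return list(pool.map(task, range(total)))
```

In a real test, `fetch` might wrap `urllib.request.urlopen` pointed at the proxy. It is left as a parameter so the sketch runs offline. Each `urlopen` call still opens a new TCP connection, so this caps the new-connection rate rather than eliminating new connections.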

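The question's capture shows both "TCP port numbers reused" and "TCP Retransmission" for the same client port. The sketch below is a simplified model, not Wireshark's actual implementation, of how those two situations differ when looking only at connection-opening SYNs:

- A SYN on a previously seen 4-tuple with a new initial sequence number (ISN) starts a new connection on a reused port.
- A SYN that repeats the previous ISN is a retransmission of a SYN that got no answer.

The model ignores connection teardown and other state Wireshark may track. `Segment` and `classify_syns` are hypothetical names, and the addresses in the test are made-up documentation addresses.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Segment:
    """Hypothetical, minimal view of a captured TCP segment."""
    src: str
    sport: int
    dst: str
    dport: int
    syn: bool
    ack: bool
    seq: int


FourTuple = Tuple[str, int, str, int]


def classify_syns(segments: List[Segment]) -> List[Tuple[int, str]]:
    """Label each client SYN (SYN without ACK) as 'new', 'port_reused' or 'syn_retransmission'."""
    last_isn: Dict[FourTuple, int] = {}
    labels: List[Tuple[int, str]] = []
    for idx, seg in enumerate(segments):
        if not seg.syn or seg.ack:
            continue  # only connection-opening SYNs are of interest here
        key = (seg.src, seg.sport, seg.dst, seg.dport)
        if key not in last_isn:
            label = "new"
        elif last_isn[key] == seg.seq:
            label = "syn_retransmission"  # same ISN: an unanswered SYN is being resent
        else:
            label = "port_reused"  # same 4-tuple, fresh ISN: a new connection
        last_isn[key] = seg.seq
        labels.append((idx, label))
    return labels
```

Consider a trace where the client sends a SYN from port 40000, later sends another SYN from port 40000 with a fresh ISN, and then resends that same SYN. For that trace the function labels the three SYNs `new`, `port_reused` and `syn_retransmission` in that order. This is the same combination of labels described in the question.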
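The article describes spurious retransmissions as segments that are sent again even though they were already acknowledged. That check can be sketched as a simplified Python model: a data segment is flagged when the receiver has already cumulatively acknowledged every byte in it. This is not Wireshark's implementation. The truncated `_retransmission` filter in the text presumably refers to Wireshark's `tcp.analysis.spurious_retransmission` field, which relies on more connection state. The model also ignores sequence-number wraparound and SACK blocks. It would flag a one-byte keep-alive probe, because the probe sits just below the acknowledged point. `Packet` and `find_spurious` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Packet:
    """Hypothetical, minimal view of a captured TCP packet."""
    src: str
    dst: str
    seq: int     # sequence number of the first payload byte
    length: int  # TCP payload length in bytes
    ack: int     # cumulative ACK number carried by this packet (0 means none)


def find_spurious(packets: List[Packet]) -> List[int]:
    """Return indices of data segments whose bytes were already cumulatively ACKed."""
    # Highest ACK number sent so far, keyed by (ACK sender, ACK receiver).
    highest_ack: Dict[Tuple[str, str], int] = {}
    spurious: List[int] = []
    for idx, p in enumerate(packets):
        # Has the peer (p.dst) already acknowledged up to the end of this segment?
        acked = highest_ack.get((p.dst, p.src), 0)
        if p.length > 0 and p.seq + p.length <= acked:
            spurious.append(idx)
        if p.ack:
            key = (p.src, p.dst)
            highest_ack[key] = max(highest_ack.get(key, 0), p.ack)
    return spurious
```

In a trace where host A sends bytes 1 to 100, B acknowledges them with ACK 101, and A then sends the same bytes again, only that resend is flagged. A resend of bytes that B has not yet acknowledged is left alone, because it may be an ordinary retransmission.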